This tutorial expains how to perform 2D needle tracking on a needle insertion sequence of images, using a particle filtering method. See [1] for more details on the method used.

#include <iostream>

#include <sstream>

#include <string>

#include <visp3/core/vpConfig.h>

#ifdef VISP_HAVE_XML2

#include <visp3/core/vpIoTools.h>

#include <visp3/core/vpMatrix.h>

#include <visp3/gui/vpDisplayD3D.h>

#include <visp3/gui/vpDisplayGDI.h>

#include <visp3/gui/vpDisplayGTK.h>

#include <visp3/gui/vpDisplayOpenCV.h>

#include <visp3/gui/vpDisplayX.h>

#include <visp3/gui/vpPlot.h>

#include <visp3/io/vpImageIo.h>

#include <visp3/io/vpParseArgv.h>

#include <visp3/ustk_core/us.h>

#include <visp3/ustk_core/usSequenceReader.h>

#include <visp3/ustk_needle_detection/usNeedleTrackerSIR2D.h>

using namespace std;

#define GETOPTARGS "cdo:h"

void usage(const char *name, const char *badparam, const std::string &opath, const std::string &user);

bool getOptions(int argc, const char **argv, std::string &opath, const std::string &user);

void usage(const char *name, const char *badparam, const std::string &opath, const std::string &user)

{

fprintf(stdout, "\n\

Write and read ultrasound sequences in 2d image files, and the associated xml settings file.\n\

\n\

SYNOPSIS\n\

%s [-o <output image path>] [-h]\n",

name);

fprintf(stdout, "\n\

OPTIONS: Default\n\

-o <output data path> %s\n\

Set data output path.\n\

From this directory, creates the \"%s\"\n\

subdirectory depending on the username, where \n\

sequenceRF2D.xml file is written.\n\

\n\

-h\n\

Print the help.\n\n",

opath.c_str(), user.c_str());

if (badparam) {

fprintf(stderr, "ERROR: \n");

fprintf(stderr, "\nBad parameter [%s]\n", badparam);

}

}

bool getOptions(int argc, const char **argv, std::string &opath, const std::string &user)

{

const char *optarg_;

int c;

while ((c = vpParseArgv::parse(argc, argv, GETOPTARGS, &optarg_)) > 1) {

switch (c) {

case 'o':

opath = optarg_;

break;

case 'h':

usage(argv[0], NULL, opath, user);

return false;

break;

case 'c':

case 'd':

break;

default:

usage(argv[0], optarg_, opath, user);

return false;

break;

}

}

if ((c == 1) || (c == -1)) {

usage(argv[0], NULL, opath, user);

std::cerr << "ERROR: " << std::endl;

std::cerr << " Bad argument " << optarg_ << std::endl << std::endl;

return false;

}

return true;

}

int main(int argc, const char *argv[])

{

std::string opt_opath;

std::string username;

std::string logFilename;

std::string opath;

std::string windowTitle;

#if !defined(_WIN32) && (defined(__unix__) || defined(__unix) || (defined(__APPLE__) && defined(__MACH__)))

opt_opath = "/tmp";

#elif defined(_WIN32)

opt_opath = "C:\\temp";

#endif

vpIoTools::getUserName(username);

if (getOptions(argc, argv, opt_opath, username) == false) {

exit(-1);

}

if (!opt_opath.empty())

opath = opt_opath;

std::string dirname = vpIoTools::createFilePath(opath.c_str(), username);

if (vpIoTools::checkDirectory(dirname) == false) {

try {

vpIoTools::makeDirectory(dirname);

}

catch (...) {

usage(argv[0], NULL, opath, username);

std::cerr << std::endl << "ERROR:" << std::endl;

std::cerr << " Cannot create " << dirname << std::endl;

std::cerr << " Check your -o " << opath.c_str() << " option " << std::endl;

exit(-1);

}

}

std::string xml_filename;

for (int i = 0; i < argc; i++) {

if (std::string(argv[i]) == "--input")

xml_filename = std::string(argv[i + 1]);

else if (std::string(argv[i]) == "--help") {

std::cout << "\nUsage: " << argv[0] << " [--input <needleSequence.xml>] [--help]\n" << std::endl;

return 0;

}

}

if (xml_filename.empty()) {

if (!env_ipath.empty())

xml_filename = env_ipath + "/needle/water_bath_minimal_noise_png/sequence.xml";

else {

std::cout << "You should set USTK_DATASET_PATH environment var to access to ustk dataset" << std::endl;

return 0;

}

}

int n0 = 150;

#if defined VISP_HAVE_X11

vpDisplay *

display =

new vpDisplayX(I);

#elif defined VISP_HAVE_GTK

vpDisplay *

display =

new vpDisplayGTK(I);

#elif defined VISP_HAVE_GDI

vpDisplay *

display =

new vpDisplayGDI(I);

#elif defined VISP_HAVE_D3D9

vpDisplay *

display =

new vpDisplayD3D(I);

#elif defined VISP_HAVE_OPENCV

vpDisplay *

display =

new vpDisplayOpenCV(I);

#else

#error "No display available."

#endif

vpDisplay::display(I);

vpDisplay::flush(I);

vpMatrix controlPoints(2, 2);

vpImagePoint P;

std::cout << "Click on the entry point." << std::endl;

vpDisplay::getClick(I, P);

controlPoints[0][0] = P.get_i();

controlPoints[1][0] = P.get_j();

std::cout << "Click on the tip." << std::endl;

vpDisplay::getClick(I, P);

controlPoints[0][1] = P.get_i();

controlPoints[1][1] = P.get_j();

needle.setControlPoints(controlPoints.t());

std::cout << "Needle model initialized." << std::endl;

unsigned int nControlPoints = 2;

unsigned int nParticles = 200;

needleDetector.

init(I, nControlPoints, nParticles, needle);

std::cout << "Needle detector initialized." << std::endl;

logFilename = dirname + std::string("/needle.dat");

std::ofstream ofile(logFilename.c_str());

std::cout << "Results will be saved in " << logFilename.c_str() << std::endl;

ofile << nPoints;

for (unsigned int i = 0; i < nPoints; ++i)

ofile << " " << controlPoints[0][i] << " " << controlPoints[1][i];

ofile << std::endl;

vpColVector tipPose, entryPose;

unsigned int it = 1;

vpMatrix tipStd(2, 2);

vpColVector tipMean;

vpColVector evalue, evector;

vpMatrix rendering;

needleDetector.

run(I, 0.0);

cout << "Tip position: (" << tipMean[0] << "," << tipMean[1] << ")" << endl;

cout <<

"Number of control points: " << needleDetector.

getNeedle()->

getOrder() << endl;

ofile << nPoints;

for (unsigned int i = 0; i < nPoints; ++i)

ofile << " " << controlPoints[0][i] << " " << controlPoints[1][i];

ofile << std::endl;

int size = static_cast<int>(ceil(log10(n0 + 1))) + 1

char *noChar = new char[size];

snprintf(noChar, size, "%d", n0);

windowTitle = std::string("Frame ") + std::string(noChar);

delete[] noChar;

vpDisplay::setTitle(I, windowTitle);

vpDisplay::display(I);

unsigned int n = rendering.getCols();

for (unsigned int j = 0; j < n - 1; ++j)

vpDisplay::displayLine(I, (int)rendering[0][j], (int)rendering[1][j], (int)rendering[0][j + 1],

(int)rendering[1][j + 1], vpColor::red, 2);

tipStd = 0.0;

for (unsigned int i = 0; i < nParticles; ++i) {

tipStd += needleDetector.

getWeight(i) * (tipPose - tipMean) * (tipPose - tipMean).t();

if ((it % 10) == 0)

vpDisplay::displayCross(I, (int)tipPose[0], (int)tipPose[1], 3, vpColor::blue);

}

vpDisplay::flush(I);

++it;

}

#if defined VISP_HAVE_DISPLAY

#endif

ofile.close();

return 0;

}

#else

int main() { std::cout << "You should install xml2 to use this example" << std::endl; }

#endif

Needle detection in 2D images based on particle filtering.

double getWeight(unsigned int i)

usPolynomialCurve2D * getNeedle()

void init(unsigned int dims[2], unsigned int nPoints, unsigned int nParticles, const usPolynomialCurve2D &needle)

usPolynomialCurve2D * getParticle(unsigned int i)

void run(vpImage< unsigned char > &I, double v)

double getLength(int nbCountSeg=50) const

vpMatrix getControlPoints() const

vpColVector getPoint(double parameter) const

vpMatrix getRenderingPoints() const

unsigned int getOrder() const

Reading of sequences of ultrasound images.

void acquire(ImageType &image)

void setSequenceFileName(const std::string &sequenceFileName)

VISP_EXPORT std::string getDataSetPath()

To run the turorial from UsTK binary directory you can use the following command:

You will see a window displaying a ultrasound image on screen.

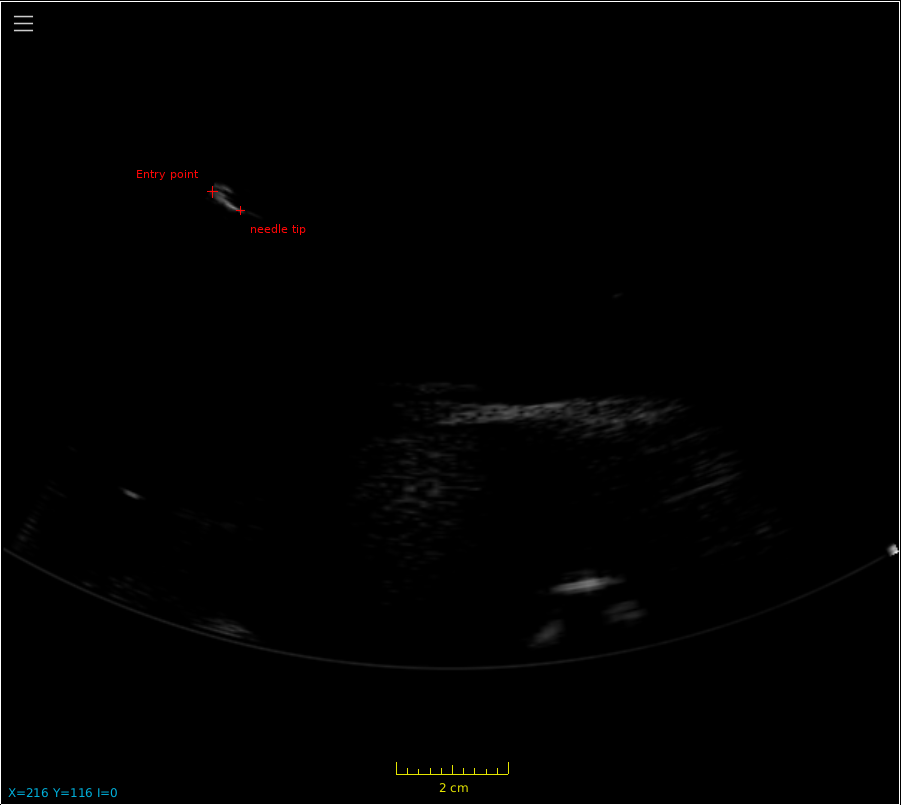

Then the first step is to initialize the tracker, by clicking on the entry point of the needle and then on the needle tip. See the image below for the needle position in the sequence used in this tutorial:

Once the tracker is initialized with your 2 successive clicks, you will see the needle tracking result on top of the images: the needle is represented with a red curve.